There is an old adage about the cobbler’s shoes, and for those of us who actually used to be cobblers, it really rings true. I spend a lot of time helping people optimize their networks, but over the years there have been times when I deployed quickly, assumed I would fix it later, and never did. This is one of those times.

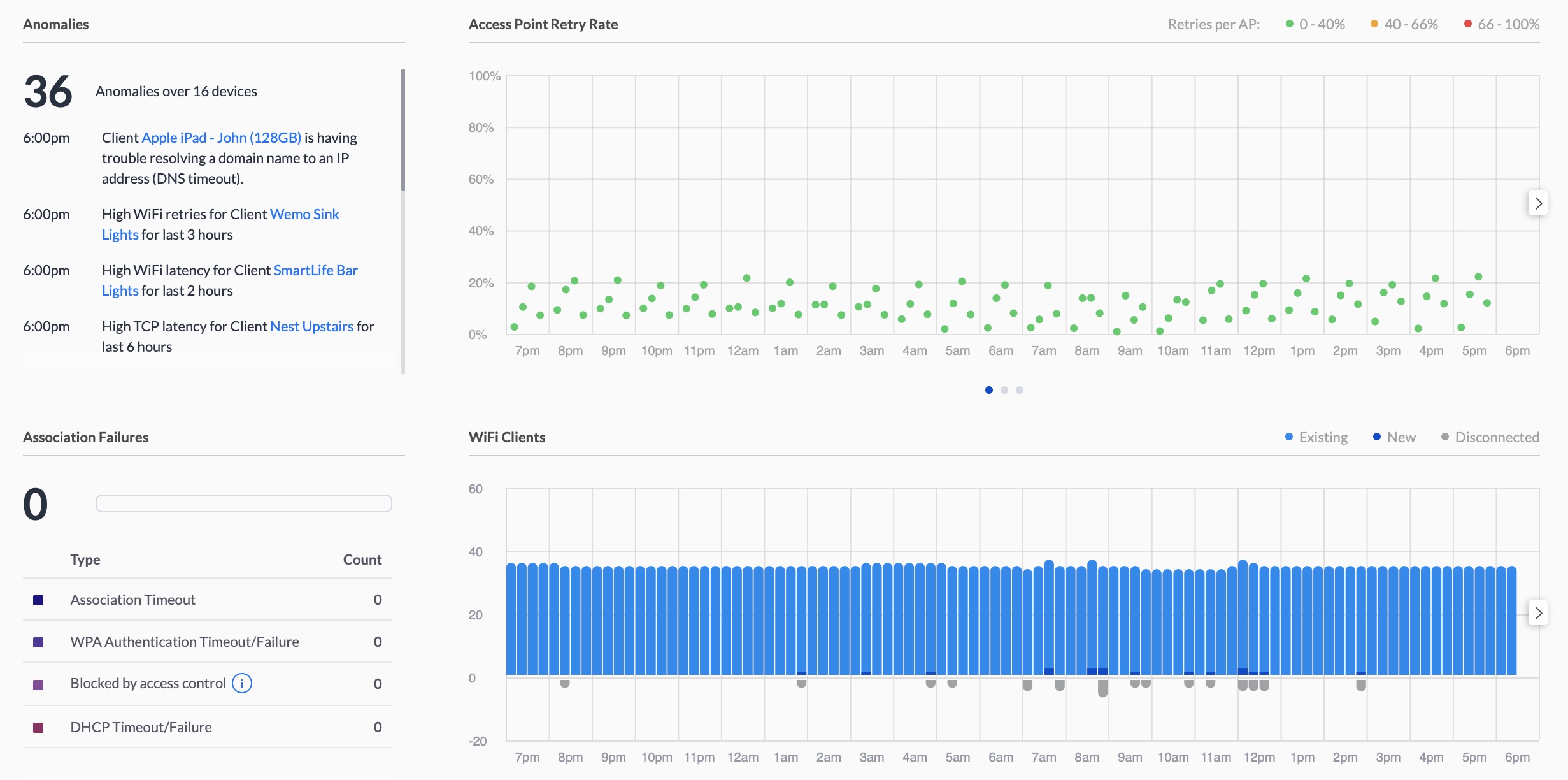

My house is 50 years old, and the ship sailed a long time ago on cleanly installing network cables. A good portion of my cabling runs along the outside of the house. Luckily, the external cabling is all outdoor rated and properly installed, but getting Ethernet from one end of the house to the other required hopping through multiple switches. Every morning my dashboard highlighted between 80 and 100 anomalies and between 8 and 10 network association errors. While this is not necessarily a deal breaker for performance, when other issues pop up, your gut tells you that some of them might be related.

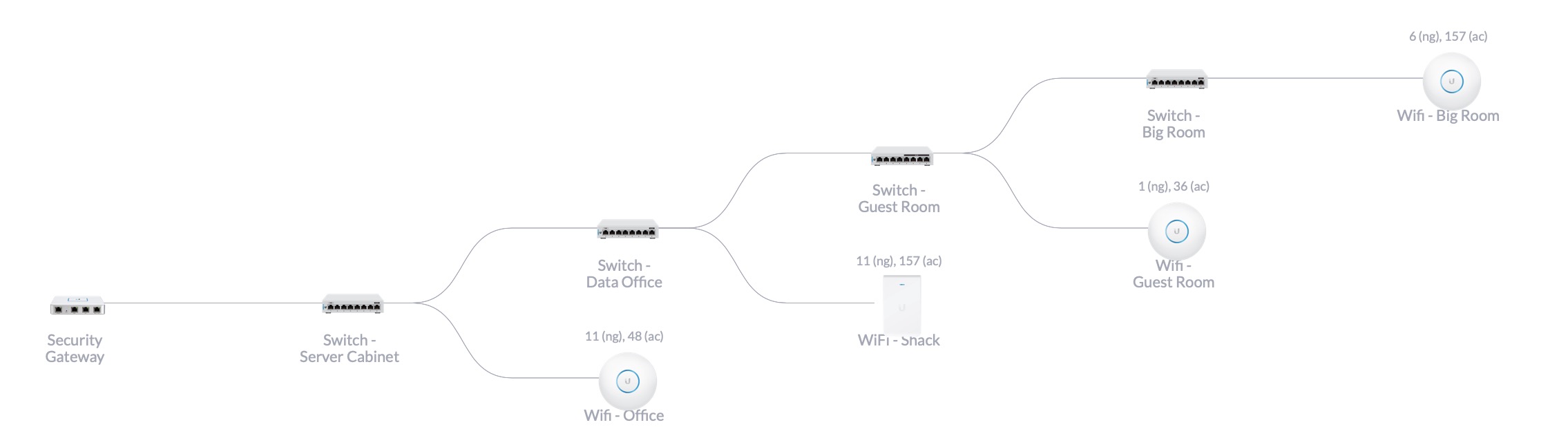

Here is a snapshot of what my network looked like:

As you can see, it all *works*, but the cascading is not optimal. Some VLANs exist only between particular ports, and the way this was configured required me to run all of the traffic up all of the trunks back to the primary switch, even though I was trying to keep the traffic off of particular switches.

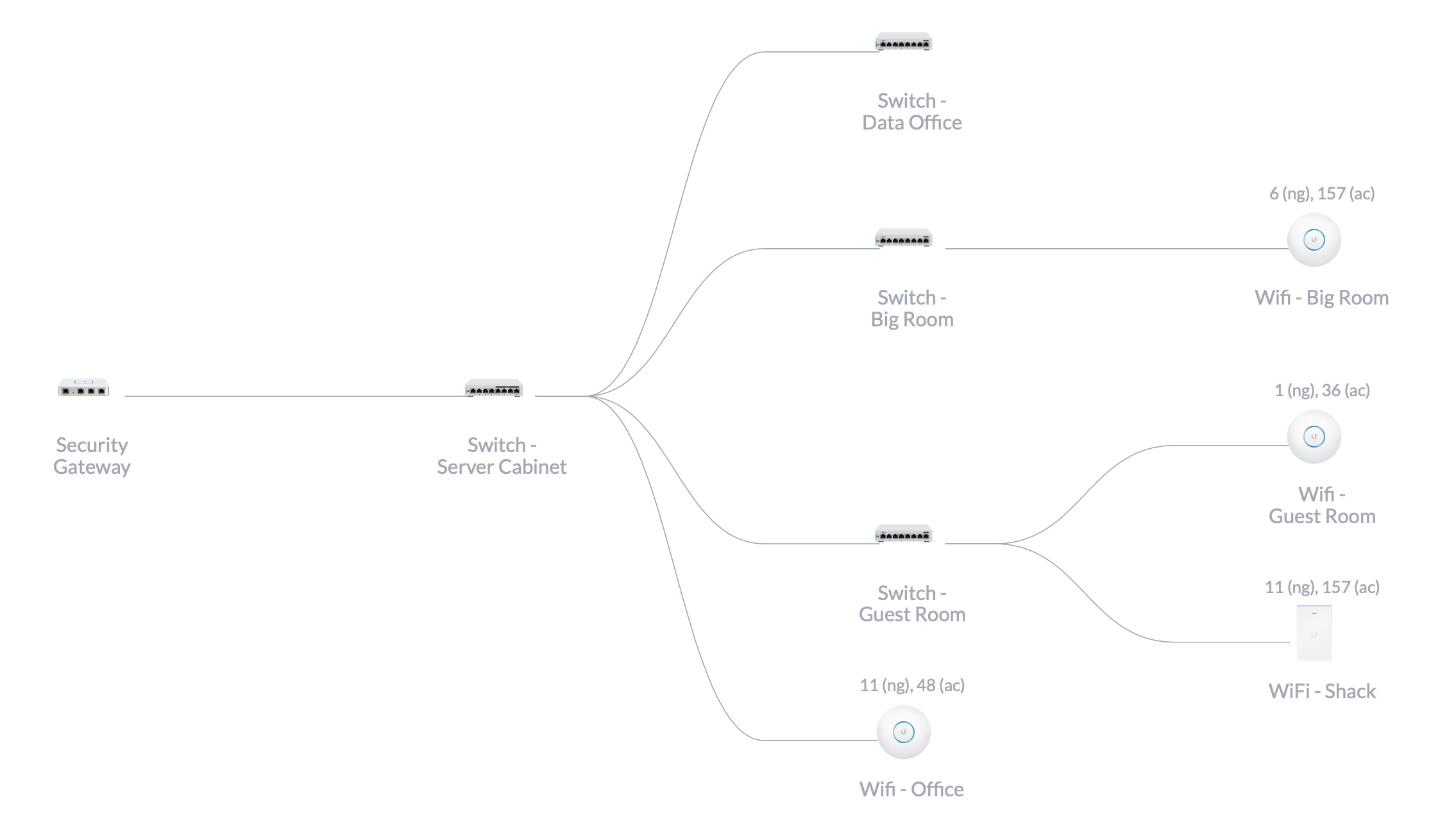

By spending some time recabling, and using patch cord jumpers to extend cables instead of terminating them at the switch, I was able to run the farthest switch (the Big Room) all the way back to the office instead of making a pit stop at the guest bedroom.
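
To make the effect of that change concrete, here is a minimal sketch that counts switch-to-switch hops before and after the recabling. It models only the three switches named above, assumes the office is where the uplink terminates, and uses made-up node names and a hypothetical `hops_from` helper; it is an illustration, not an export of my actual topology.

```python
from collections import deque


def hops_from(root, links):
    """Breadth-first search: number of links between root and every other switch."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)

    distance = {root: 0}
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return distance


# Assumed links (illustrative names only).
before = [("office", "guest_bedroom"), ("guest_bedroom", "big_room")]
after = [("office", "guest_bedroom"), ("office", "big_room")]

print(hops_from("office", before)["big_room"])  # cascaded through the guest bedroom
print(hops_from("office", after)["big_room"])   # home run back to the office
```

In this simplified model the Big Room goes from two hops to one, and the guest bedroom switch no longer carries the Big Room's traffic.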

Once the rewiring was completed, the last step was setting up the port configurations, as almost everything had changed. On the primary switch I also set the RSTP bridge priority to 4096, and on all other switches I set it to 32768 (the standard default). The lowest priority value wins the root bridge election, so this makes the primary switch the root of the spanning tree. While it is a small network (a couple of servers, 5 clients, mostly on Wi-Fi, and 4 switches), setting the RSTP priorities explicitly should help.
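
For anyone wondering why the priority value matters, here is a rough sketch of how a spanning tree root is chosen: each switch has a bridge ID made of its priority followed by its MAC address, and the numerically lowest bridge ID becomes the root. The switch names and MAC addresses below are invented for illustration, and `Bridge` and `elect_root` are hypothetical helpers, not anything from a switch vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bridge:
    name: str
    priority: int  # configurable in steps of 4096, from 0 to 61440
    mac: str       # tie-breaker when priorities are equal

    def bridge_id(self):
        # Compare priority first, then the MAC address as a number.
        return (self.priority, int(self.mac.replace(":", ""), 16))


def elect_root(bridges):
    """Return the bridge with the lowest (priority, MAC) pair."""
    for bridge in bridges:
        if bridge.priority % 4096 != 0 or not 0 <= bridge.priority <= 61440:
            raise ValueError(f"invalid priority on {bridge.name}: {bridge.priority}")
    return min(bridges, key=Bridge.bridge_id)


# Made-up MAC addresses; the primary deliberately has the highest one.
all_defaults = [
    Bridge("primary", 32768, "00:11:22:33:44:ff"),
    Bridge("guest_bedroom", 32768, "00:11:22:33:44:01"),
    Bridge("big_room", 32768, "00:11:22:33:44:02"),
]
tuned = [Bridge("primary", 4096, "00:11:22:33:44:ff")] + all_defaults[1:]

print(elect_root(all_defaults).name)  # lowest MAC wins when priorities tie
print(elect_root(tuned).name)         # lower priority wins regardless of MAC
```

With every switch left at the default, the root ends up being whichever switch happens to have the lowest MAC address, which may well be one at the edge of the network. Lowering the priority on the primary switch removes that element of chance.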

Here is a view of the new topology:

Once finished, there was a marked improvement from my perspective: